jSymbolic 2.2

Overview

jSymbolic is a software application intended for conducting research in the fields of music information retrieval (MIR), musicology and music theory. Its primary purpose is to extract statistical information from musical data stored symbolically in file formats such as MIDI or MEI. This statistical information is formulated as feature values, which may be fed directly into automatic classification systems, may be used to query large musical datasets, or may be used by musicologists and music theorists for conducting empirical musical research.

jSymbolic includes an easy-to-use GUI and may also be run from the command line. It also has an API facilitating programmatic use. The software can be used with its general-purpose default settings, or advanced users can customize its behavior through settings saved in configuration files.

Like all jMIR components, jSymbolic is free and open-source. It is designed both to be used directly for conducting research and to serve as a platform for iteratively developing new features that can then be shared among researchers. As such, jSymbolic emphasizes extensibility: its modular design facilitates the implementation and incorporation of new features, all other feature values are automatically made available to each new feature, and dynamic feature extraction scheduling automatically resolves feature dependencies. jSymbolic is implemented in Java in order to maximize cross-platform utilization.
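The idea of scheduling feature extraction so that dependencies are resolved automatically can be illustrated with a topological sort. The sketch below is not jSymbolic's actual scheduler: the class `FeatureScheduler`, the method `extractionOrder`, and the dependency relationships in `main` are all hypothetical and chosen only for illustration. It assumes each feature declares the names of the other features whose values it needs.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: NOT part of the jSymbolic API.
public class FeatureScheduler {

    /**
     * Returns an order in which every feature appears after all of the
     * features it depends on (Kahn's algorithm). Keys are feature names;
     * values list the features whose values that feature needs.
     */
    public static List<String> extractionOrder(Map<String, List<String>> dependencies) {
        Map<String, Integer> unmet = new LinkedHashMap<>();          // unresolved dependency counts
        Map<String, List<String>> dependents = new LinkedHashMap<>(); // reverse edges

        for (Map.Entry<String, List<String>> entry : dependencies.entrySet()) {
            unmet.put(entry.getKey(), entry.getValue().size());
            for (String dependency : entry.getValue()) {
                if (!dependencies.containsKey(dependency)) {
                    throw new IllegalArgumentException("Unknown feature: " + dependency);
                }
                dependents.computeIfAbsent(dependency, k -> new ArrayList<>()).add(entry.getKey());
            }
        }

        // Features with no dependencies can be extracted immediately.
        Deque<String> ready = new ArrayDeque<>();
        for (Map.Entry<String, Integer> entry : unmet.entrySet()) {
            if (entry.getValue() == 0) {
                ready.add(entry.getKey());
            }
        }

        List<String> order = new ArrayList<>();
        while (!ready.isEmpty()) {
            String feature = ready.remove();
            order.add(feature);
            // Extracting this feature may unblock features that depend on it.
            for (String dependent : dependents.getOrDefault(feature, List.of())) {
                if (unmet.merge(dependent, -1, Integer::sum) == 0) {
                    ready.add(dependent);
                }
            }
        }

        if (order.size() != dependencies.size()) {
            throw new IllegalStateException("Cyclic feature dependencies");
        }
        return order;
    }

    public static void main(String[] args) {
        // Illustrative dependencies only; not taken from the jSymbolic source.
        Map<String, List<String>> dependencies = new LinkedHashMap<>();
        dependencies.put("Most Common Pitch Class", List.of("Pitch Class Histogram"));
        dependencies.put("Pitch Class Histogram", List.of("Basic Pitch Histogram"));
        dependencies.put("Basic Pitch Histogram", List.of());
        System.out.println(extractionOrder(dependencies));
    }
}
```

With an ordering like this, a newly added feature only needs to name the features it relies on; it never has to arrange for them to be computed first.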

jSymbolic is also part of the SIMSSA (Single Interface for Music Score Searching and Analysis) and MIRAI (Music Information Research and Infrastructure) projects, and is integrated with the music stored on the associated SIMSSA database.

Features Extracted

jSymbolic is packaged with a library of 246 unique implemented features. Some of these are multidimensional, for a total of 1497 feature values. These features were developed through extensive analysis of publications in the fields of music theory, musicology and MIR. Most of these features had not previously been applied to MIR research, and many of them are entirely novel. The features can be loosely divided into the following seven categories:

- Pitch Statistics: How common are various pitches relative to one another, in terms of both absolute pitches and pitch classes? How tonal is a piece? What is its range? How much variety in pitch is there?

- Melodies and Horizontal Intervals: What kinds of melodic intervals are present? How much melodic variation is there? What can be observed from melodic contour measurements? What types of phrases are used and how often are they repeated?

- Chords and Vertical Intervals: What vertical intervals are present? What types of chords do they represent? How much harmonic movement is there, and how fast is it?

- Rhythm: Features are calculated based on the time intervals between note attacks and the durations of individual notes. What meter and what rhythmic patterns are present? Is rubato used? How does rhythm vary between voices?

- Instrumentation: Which instruments are present, and which are emphasized relative to others? Both pitched and non-pitched instruments are considered.

- Texture: How many independent voices are there and how do they interact (e.g., polyphonic or homophonic)? What is the relative importance of voices?

- Dynamics: How loud are notes and what kinds of variations in dynamics occur?
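To make the notion of a feature value concrete, the following minimal sketch computes two simple pitch statistics from a list of MIDI pitch numbers: a normalized pitch class histogram (a multidimensional feature with 12 values) and the pitch range (a one-dimensional feature). The class and method names are hypothetical, and the calculations are simplified illustrations rather than jSymbolic's own implementations, which may differ in detail.

```java
import java.util.Arrays;

// Hypothetical sketch: NOT jSymbolic's implementation of these features.
public class PitchStatisticsSketch {

    /**
     * Fraction of notes belonging to each of the 12 pitch classes
     * (index 0 = C, 1 = C sharp / D flat, ..., 11 = B).
     * Assumes MIDI pitch numbers in the range 0 to 127.
     */
    public static double[] pitchClassHistogram(int[] midiPitches) {
        double[] histogram = new double[12];
        if (midiPitches.length == 0) {
            return histogram; // all zeros when there are no notes
        }
        for (int pitch : midiPitches) {
            histogram[pitch % 12] += 1.0;
        }
        for (int i = 0; i < histogram.length; i++) {
            histogram[i] /= midiPitches.length;
        }
        return histogram;
    }

    /** Difference in semitones between the highest and lowest pitches. */
    public static int range(int[] midiPitches) {
        if (midiPitches.length == 0) {
            return 0;
        }
        int highest = Arrays.stream(midiPitches).max().getAsInt();
        int lowest = Arrays.stream(midiPitches).min().getAsInt();
        return highest - lowest;
    }

    public static void main(String[] args) {
        int[] pitches = {60, 64, 67, 72}; // C4, E4, G4, C5
        System.out.println(Arrays.toString(pitchClassHistogram(pitches)));
        System.out.println(range(pitches)); // 12 semitones
    }
}
```

Because the histogram is normalized by the number of notes, pieces of different lengths yield comparable values, which is what makes such features usable as input to classifiers or database queries.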

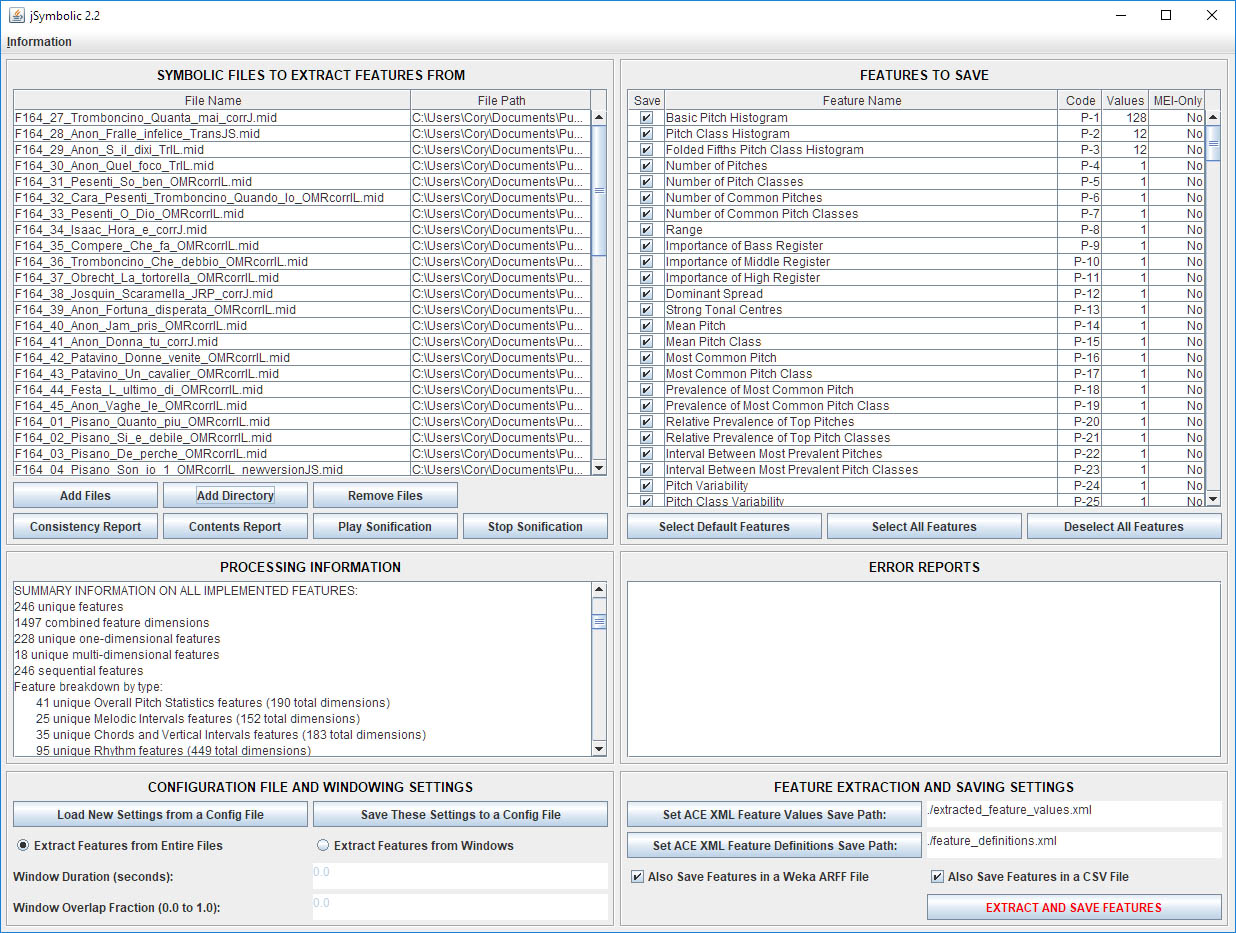

Screen Shot

Manual

Many more details on jSymbolic are available in the jSymbolic manual.

Tutorial

A step-by-step jSymbolic tutorial is also available, including worked examples.

Related Publications and Presentations

McKay, C., and M. E. Cuenca. 2024. Harmonious research collaborations in computational musicology. Presented at the Digital Technologies Applied to Music Research Conference.

McKay, C., and J. Cumming. 2024. New tools for old questions: Applying feature extraction and machine learning to Rodin’s "The Josquin Canon at 500". Presented at the Medieval and Renaissance Music Conference.

McKay, C., and J. Cumming. 2024. Using feature-based composer classification to test musicological evidence for Josquin attribution. Extended Abstracts for the Late-Breaking Demo Session of the 25th International Society for Music Information Retrieval Conference.

Cuenca, M. E., and C. McKay. 2023. The stylistic origin of the anonymous 16th century masses transcribed by Siro Cisilino (1903-1987) at the Fondazione Cini: A statistical and machine learning approach. Presented at the Medieval and Renaissance Music Conference.

McKay, C. 2023. Feature extraction, feature-indexed databases, features in musicology and evolution with feature; Also, features. Presented at the CIRMMT Scientific Event, McGill University, Montreal, Canada. 25 May 2023.

McKay, C. 2023. From jSymbolic 2 to 3: More musical features. Proceedings of the International Symposium on Computer Music Multidisciplinary Research. 752–755.

McKay, C., J. Cumming, and I. Fujinaga. 2023. Rhythmic, melodic and vertical n-gram features as a means of studying symbolic music computationally. Presented at the Digital Humanities Conference.

McKay, C., and R. Mizrahi. 2023. SIMSSA DB: Go Jump in the (Data) Lake. Presented at the LinkedMusic Workshop, McGill University, Montreal, Canada. 21 October 2023.

Cuenca, M. E., and C. McKay. 2022. Musical influences on the masses of Pedro Fernández Buch (c. 1574-1648): A stylistic comparison using statistical analysis. Presented at the Medieval and Renaissance Music Conference.

McKay, C., and M. E. Cuenca. 2022. Influencias musicales en las misas y motetes de Cristóbal de Morales y Francisco Guerrero: Una aproximación estadística. In Musicología en transición, eds. J. Marín-López, A. Mazuela-Anguita and J. J. Pastor-Comín, 1031–1052. Madrid, Spain: Sociedad Española de Musicología.

McKay, C. 2022. SIMSSA DB: An introduction. Presented at the CIRMMT LinkedMusic Workshop on Music Databases, McGill University, Montreal, Canada. 18 November 2022.

McKay, C. 2022. SIMSSA DB: Some details. Presented at the LinkedMusic Project Meeting, McGill University, Montreal, Canada. 19 November 2022.

McKay, C., and J. Cumming. 2022. Summary features as the basis for content-based queries of symbolic music repositories. Presented at the Congress of the International Association of Music Libraries, Archives and Documentation Centres.

Vatolkin, I., and C. McKay. 2022. Multi-objective investigation of six feature source types for multi-modal music classification. Transactions of the International Society for Music Information Retrieval 5 (1): 1–19.

Vatolkin, I., and C. McKay. 2022. Stability of symbolic feature group importance in the context of multi-modal music classification. Proceedings of the International Society for Music Information Retrieval Conference. 469–76.

Cuenca, M. E., and C. McKay. 2021. Exploring musical style in the anonymous and doubtfully attributed mass movements of the Coimbra manuscripts: A statistical and machine learning approach. Journal of New Music Research 50 (3): 199–219.

Cuenca, M. E., and C. McKay. 2021. Influencias musicales en las misas y motetes de Cristóbal de Morales y Francisco Guerrero: Una aproximación estadística. Presented at the Congreso de la Sociedad Española de Musicología.

Cumming, J., and C. McKay. 2021. Using corpus studies to find the origins of the madrigal. Proceedings of the Future Directions of Music Cognition International Conference. 38–42.

McKay, C. 2021. Exploring composer attribution in motet cycles using machine learning. Gaffurius Codices Online, Schola Cantorum Basiliensis.

McKay, C., and M. E. Cuenca. 2021. Musical influences on the masses and motets of Cristóbal de Morales and Francisco Guerrero: A statistical approach. Presented at the Medieval and Renaissance Music Conference.

Rodríguez-García, E., and C. McKay. 2021. Ave festiva ferculis: Exploring attribution by combining manual and computational analysis. Presented at the Medieval and Renaissance Music Conference.

Rodríguez-García, E., and C. McKay. 2021. Composer attribution of Renaissance motets: A case study using statistical features and machine learning. In The Anatomy of Iberian Polyphony around 1500, eds. E. Rodríguez-García and J. P. d’Alvarenga, 401–38. Kassel, Germany: Edition Reichenberger.

McKay, C., R. Adamian, J. Cumming, and I. Fujinaga. 2020. Exploring Renaissance music using n-gram aggregates to summarize local musical content. Presented at the Medieval and Renaissance Music Conference.

McKay, C., J. Cumming, and I. Fujinaga. 2020. Lessons learned in a large-scale project to digitize and computationally analyze musical scores. Digital Scholarship in the Humanities.

Cuenca, M. E., and C. McKay. 2019. Análisis estadístico de misas ibéricas renacentistas a través del software jSymbolic. Presented at the El análisis musical actual: Marco teórico e interdisciplinariedad conference.

Cuenca, M. E., and C. McKay. 2019. Exploring musical style in the anonymous and doubtfully attributed mass movements of the Coimbra manuscripts: A statistical approach. Presented at the Medieval and Renaissance Music Conference.

Cumming, J., C. McKay, N. Nápoles López, and S. Margot. 2019. Contrapuntal style: Pierre de la Rue vs. Josquin Des Prez. Presented at the CIRMMT Workshop on SIMSSA (Single Interface for Music Score Searching and Analysis), McGill University, Montreal, Canada. 21 Saturday 2019.

Hopkins, E., Y. Ju, G. Polins Pedro, C. McKay, J. Cumming, and I. Fujinaga. 2019. SIMSSA DB: Symbolic music discovery and search. Poster presentation at the International Conference on Digital Libraries for Musicology.

Hopkins, E., G. Polins Pedro, Y. Ju, C. McKay, J. Cumming, and I. Fujinaga. 2019. SIMSSA DB: A brief overview of the data model. Presented at the DACT (Digital Analysis of Chant Transmission) Workshop, McGill University, Montreal, Canada. 21 Saturday 2019.

Ju, Y., G. Polins Pedro, C. McKay, E. Hopkins, J. Cumming, and I. Fujinaga. 2019. Enabling music search and analysis: A database for symbolic music files. Presented at the Music Encoding Conference.

McKay, C., and M. E. Cuenca. 2019. CRIM, machine learning and big data: A case study on the Coimbra manuscripts. Presented at the Counterpoints: Renaissance Music and Scholarly Debate in the Digital Domain conference.

McKay, C., E. Hopkins, G. Polins Pedro, Y. Ju, A. Kam, J. Cumming, and I. Fujinaga. 2019. A collaborative symbolic music database for computational research on music. Presented at the Medieval and Renaissance Music Conference.

McKay, C., J. Cumming, and I. Fujinaga. 2019. Lessons learned in a large-scale project to digitize and computationally analyze musical scores. Presented at the Digital Humanities Conference.

McKay, C., and R. Adamian. 2019. jSymbolic in 2019: Updates and improvements. Presented at the CIRMMT Workshop on SIMSSA (Single Interface for Music Score Searching and Analysis), McGill University, Montreal, Canada. 21 Saturday 2019.

McKay, C. 2019. SIMSSA DB: A collaborative musicological research database. Presented at the Digital Humanities Conference Digital Musicology Study Group.

Cumming, J., and C. McKay. 2018. Contrapuntal style: Josquin Desprez vs. Pierre de la Rue. Presented at the Conference on Pierre de la Rue and Music at the Habsburg-Burgundian Court.

Cumming, J., C. McKay, J. Stuchbery, and I. Fujinaga. 2018. Methodologies for creating symbolic corpora of Western music before 1600. Proceedings of the International Society for Music Information Retrieval Conference. 491–8.

Cumming, J., and C. McKay. 2018. Revisiting the origins of the Italian madrigal using machine learning. Presented at the Medieval and Renaissance Music Conference. 35.

McKay, C., J. Cumming, and I. Fujinaga. 2018. jSymbolic 2.2: Extracting features from symbolic music for use in musicological and MIR research. Proceedings of the International Society for Music Information Retrieval Conference. 348–54.

McKay, C. 2018. jSymbolic: A software application for music information retrieval and analysis. Invited Speaker. CESEM, Nova University of Lisbon, Lisbon, Portugal. 8 March 2018.

McKay, C. 2018. Performing statistical musicological research using jSymbolic and machine learning. Presented at The Anatomy of Polyphonic Music around 1500 International Conference.

McKay, C. 2018. SIMSSA DB: A database for computational musicological research. Presented at the International Association of Music Libraries, Archives and Documentation Centres International Congress SIMSSA Workshop.

McKay, C., J. Cumming, and I. Fujinaga. 2017. Characterizing composers using jSymbolic2 features. Extended Abstracts for the Late-Breaking Demo Session of the 18th International Society for Music Information Retrieval Conference.

McKay, C., T. Tenaglia, J. Cumming, and I. Fujinaga. 2017. Using statistical feature extraction to distinguish the styles of different composers. Presented at the Medieval and Renaissance Music Conference.

McKay, C., T. Tenaglia, and I. Fujinaga. 2016. jSymbolic2: Extracting features from symbolic music representations. Extended Abstracts for the Late-Breaking Demo Session of the 17th International Society for Music Information Retrieval Conference.

McKay, C. 2012. Classifying music with jMIR. Invited Speaker. Department of Languages and Science of Computation, University of Malaga, Spain. 10 January 2012.

McKay, C. 2010. Automatic music classification with jMIR. Ph.D. Thesis. McGill University, Canada.

McKay, C., J. A. Burgoyne, J. Hockman, J. B. L. Smith, G. Vigliensoni, and I. Fujinaga. 2010. Evaluating the genre classification performance of lyrical features relative to audio, symbolic and cultural features. Proceedings of the International Society for Music Information Retrieval Conference. 213–8.

McKay, C., and I. Fujinaga. 2010. Improving automatic music classification performance by extracting features from different types of data. Proceedings of the ACM SIGMM International Conference on Multimedia Information Retrieval. 257–66.

McKay, C., and I. Fujinaga. 2008. Combining features extracted from audio, symbolic and cultural sources. Proceedings of the International Conference on Music Information Retrieval. 597–602.

McKay, C., and I. Fujinaga. 2007. Style-independent computer-assisted exploratory analysis of large music collections. Journal of Interdisciplinary Music Studies 1 (1): 63–85.

McKay, C., and I. Fujinaga. 2006. jSymbolic: A feature extractor for MIDI files. Proceedings of the International Computer Music Conference. 302–5.

Questions and Comments